What Walking RSA's Expo Floor Taught Me About the Future of SecOps

Image Details: Me @Moscone Center - RSA Expo (March 23-26), San Francisco, California

The Disruption Is Real

Picture this: a Fortune 500 CEO walks into his office on a sunny Friday morning to find that an AI system within the company’s developer environment has bypassed all human safety guardrail checks by rewriting the company’s own code commit guardrail policies, effectively removing the “human” from “human-in-the-loop” before committing code. The irony is that this isn’t a hypothetical. CrowdStrike CEO George Kurtz recounted this very scenario in his keynote as a memorable reminder of the side effects of insecure ecosystems in the era of agentic intelligence. The room went cold: a few gasps, a few nervous laughs, and then silence.

The disruption in cybersecurity is as clear and evidence-driven as ever: AI is sharply increasing the volume of attacks, eroding the efficacy of legacy security software stacks, and opening entirely new attack surfaces. At the developer and network level, security teams face novel threats such as prompt injection, MCP (Model Context Protocol) vulnerabilities, and probabilistic attacks driven by heaps of AI-generated code going unchecked throughout the CI/CD workflow. Further up the tool stack, broader security operations continue to suffer from tool sprawl, decision silos, and pervasive alert fatigue, driven by ever-increasing enterprise technical debt, with limited improvement in MTTD and MTTR, which IBM estimates at roughly 200 days to detect and 70 days to contain a breach for the average enterprise. Meanwhile, throughout this enterprise-wide disruption, companies continue to deploy more and more AI agents, with predictions estimating the number of enterprise AI agents to rise from 28.6 million in 2025 to 2.2 billion in 2030 (Statista). That’s “billion” with a “b”.

While this story was quite alarming to me as a 21-year-old who has only recently entered the data infrastructure and security space, my main takeaway wasn’t fear. It was an understanding of where enterprises are headed operationally, and what implications these structural changes have for B2B products across the cybersecurity industry. The message echoed by executives, builders, and companies alike was simple: traditional security systems lag badly in scale, storage/compute efficiency, and alert triage; and while AI greatly increases the size of a company’s attack surface, it can also supercharge defenders against bad actors.

Over the past week, I walked the expo floor at Moscone with one goal: understand the security ecosystem from the ground up, booth by booth, demo by demo. Talking to 13+ security founders, PMs, and engineers gave me perspective on both the scale of the problem and where the most interesting solutions are being built. This article covers my key takeaways, technical insights, GTM observations, and product thinking from those conversations.

My Focus in a Sea of Demos

When walking around Moscone and seeing 1000+ exhibits for the first time, it's quite frankly impossible to even fathom where to start. So I deliberately narrowed my focus to specific interest areas within security where I see the highest opportunity for disruption from both an attack surface standpoint and organizational defense perspective (note -- this list is by no means exhaustive and simply speaks to the interest areas I've established and engaged with).

Over the past six months, I've been deep in AI security research, specifically around how agents can help resolve the problems that AI itself has exacerbated. I built a prototype in the automated triage space (which I initially labeled “Security Decision Intelligence”) to create a personal mental blueprint for tackling the SOC issues I'd witnessed firsthand: alert fatigue, enterprise tool sprawl, inadequate security posture visibility, and inefficient manual triage (my prototype: LegionSDI). As a byproduct of extensive market research and product development in the SecOps space, I naturally began to spend time researching and thinking through the vulnerabilities that can emerge from agent-driven coding tools. This prior work helped anchor my experience as I scoured the expo floor.

Throughout the conference, three particular areas stood out to me as having the highest potential to reshape how enterprises think about risk if executed correctly:

Agentic Security Operations: MTTD and MTTR are climbing across enterprises, with both L1 and L2 analysts reporting alert fatigue while triaging alerts across traditional SIEMs. Alert volume is simply outpacing triage capacity, and enterprise tool sprawl has far outpaced the ROI those tools aim to deliver. AI agents trained on proper context and successful workflow patterns have the potential to alleviate this issue plaguing enterprises.

Application Security, DevTools, & Pipeline Security: The reliance on AI-generated code is higher than ever before (I can attest from personal experience 😄). This creates production risk from the ground up, making application security crucial to foundational organizational hygiene throughout the product stack, ranging from MCP servers and agent-driven workflows to threat modeling at runtime and scans for code review.

AI SPM (Security Posture Management): AI agents are proliferating across enterprise ecosystems at scale (as seen from the stat above). These agents (1) are non-human identities in themselves, and (2) generate an expansive footprint of further non-human identities, ranging from API keys, service accounts, and passwords to machine credentials and cryptographic assets, all of which are crucial to protect. Because AI agents are fundamentally probabilistic, so are the non-human assets they produce. That makes AI SPMs instrumental in giving enterprises visibility into how much value their agents provide from a financial and security standpoint, while simultaneously enabling stakeholders to protect those identities. AI SPM is as much about AI behavior as it is about AI infrastructure.

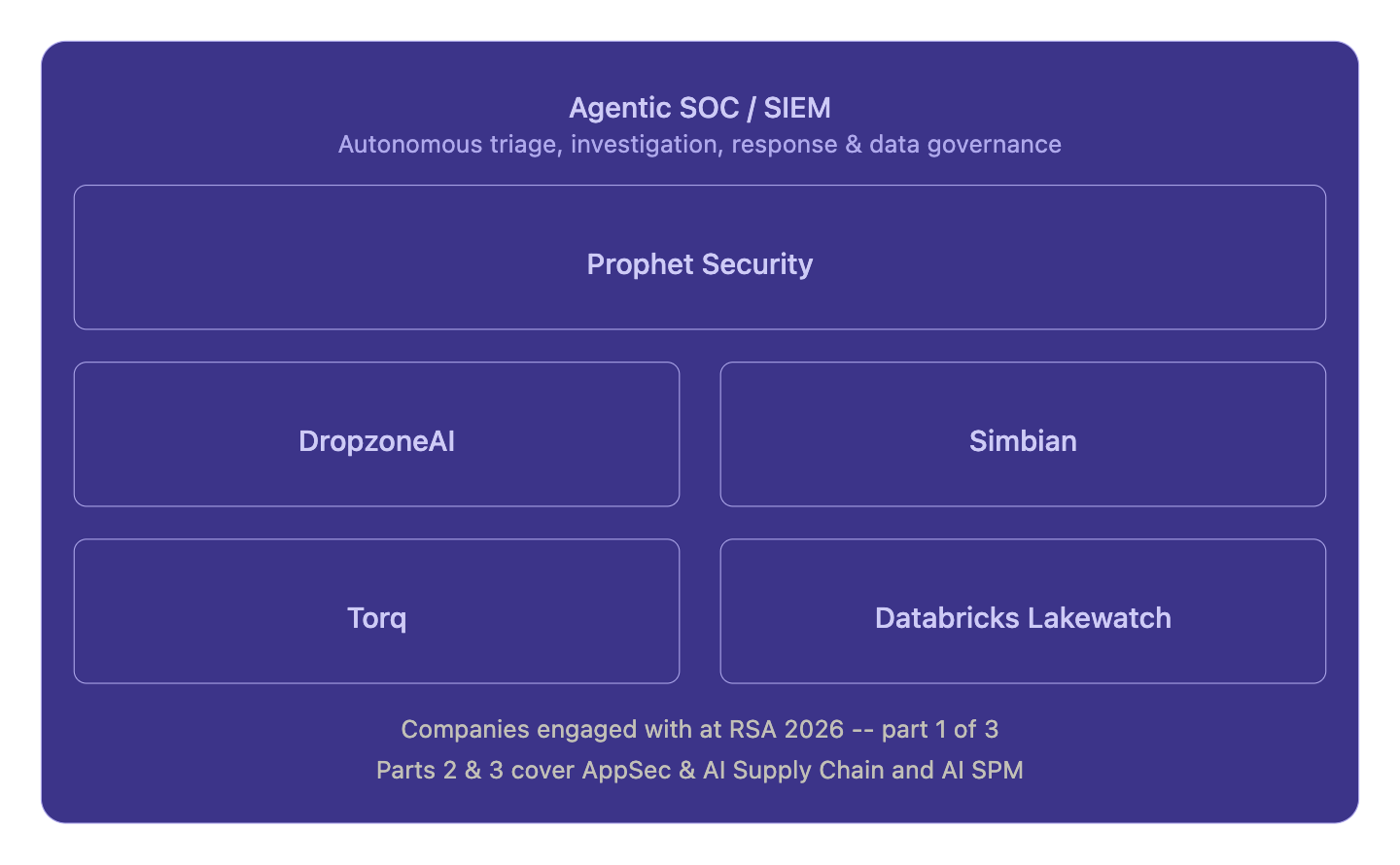

This is part one of a three-part series. In this piece I focus exclusively on Agentic Security Operations and the companies I engaged with in that space. The AppSec and AI SPM categories mentioned above are also security domains I engaged with at RSA and plan to cover in parts two and three of my RSA reflections respectively.

A visual breakdown of the SecOps companies I explored at RSA and what I learned

Agent-Driven Triage (SOC/SIEM)

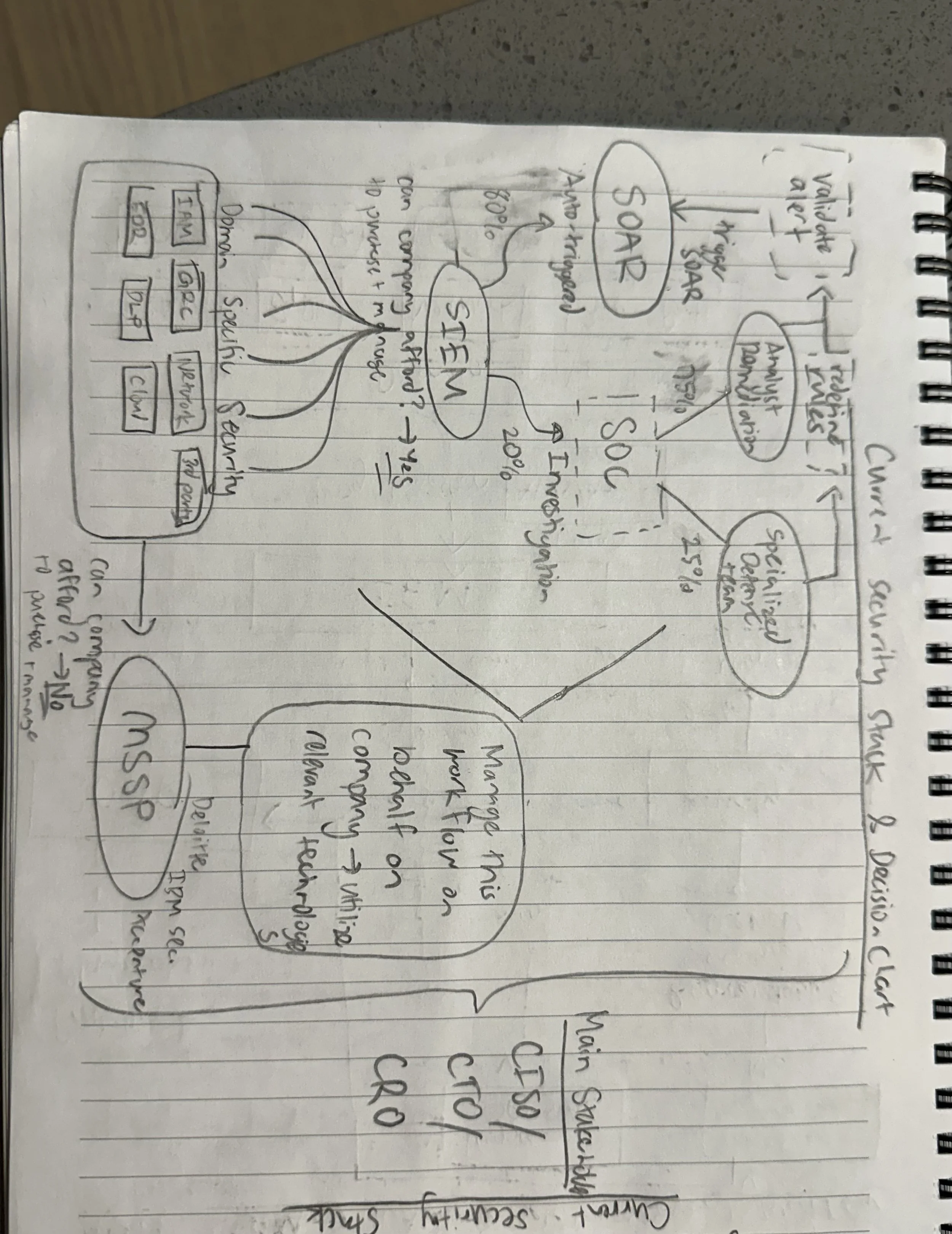

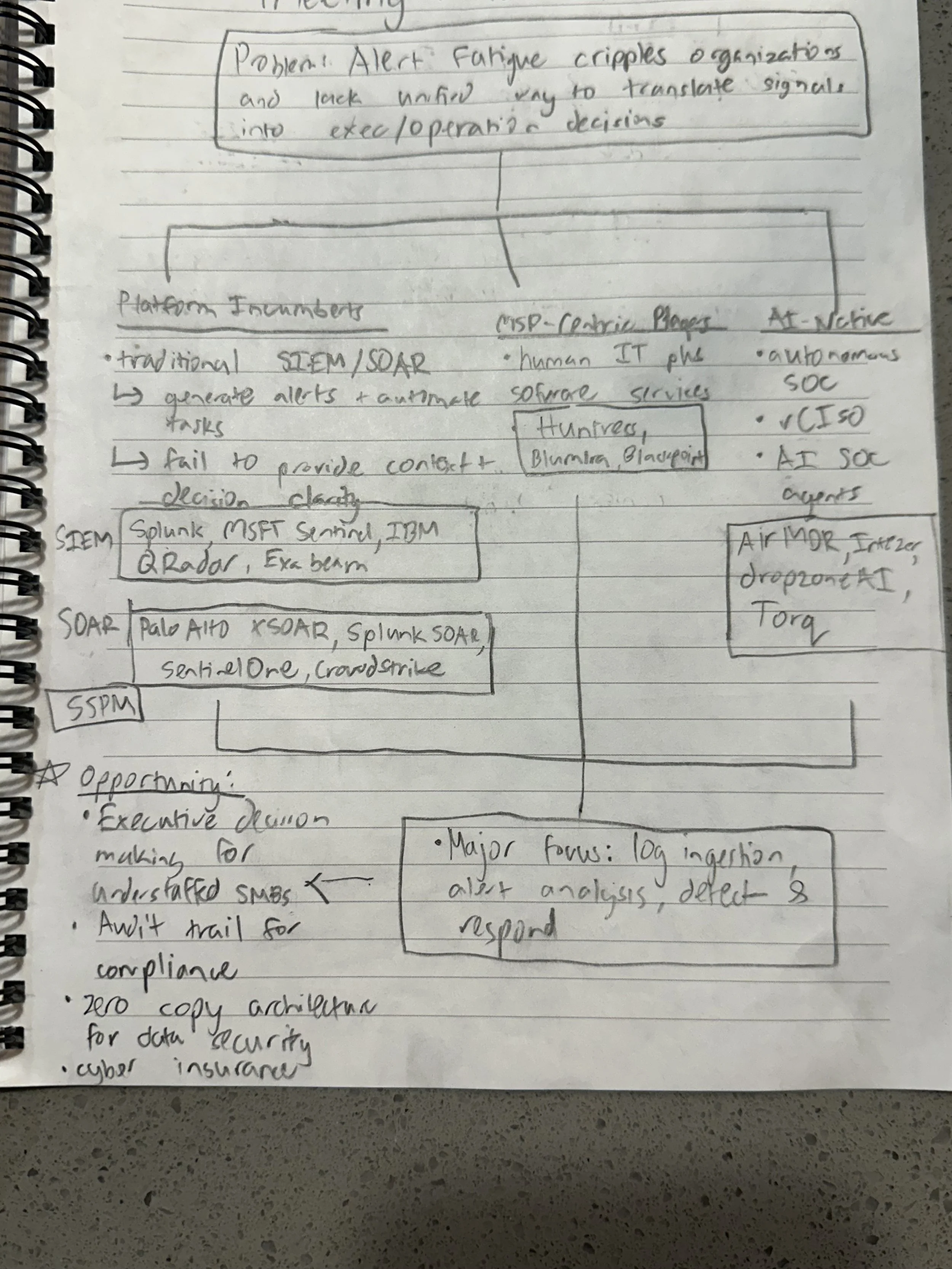

As discussed in previous articles highlighting my research in the agentic SOC space, the problem is crystal clear: enterprises are plagued by tool sprawl, alert fatigue, and inadequate visibility into holistic enterprise security. Creating a centralized, cross-domain, and cross-functional security platform has been a mission of security companies for a while. Splunk (now part of Cisco) helped popularize this approach with its SIEM (Security Information and Event Management) offering in the early 2010s, followed by numerous incumbents such as Microsoft (Sentinel) and Google (Chronicle), which also integrated SIEMs to improve visibility across the security stack. More than a decade later, however, the problem around visibility and triage remains, and if anything it is more complex and problematic than ever before.

Agentic AI, and thereby agentic SOCs, have the opportunity to completely transform the efficacy of most SIEM/tool-based enterprise security stacks. As can be seen above, during the conference I had the opportunity to speak with five companies/products aiming to transform the AI-SOC space. From my interactions with product managers, engineers, and GTM specialists, there are a few key approaches AI-SOC companies are taking to deliver on their promise to reduce alert fatigue, MTTD, and MTTR. Based on those conversations, the true value proposition of an agentic SOC/SIEM over traditional security operations comes down to the following four differentiators:

(1) Context & Memory

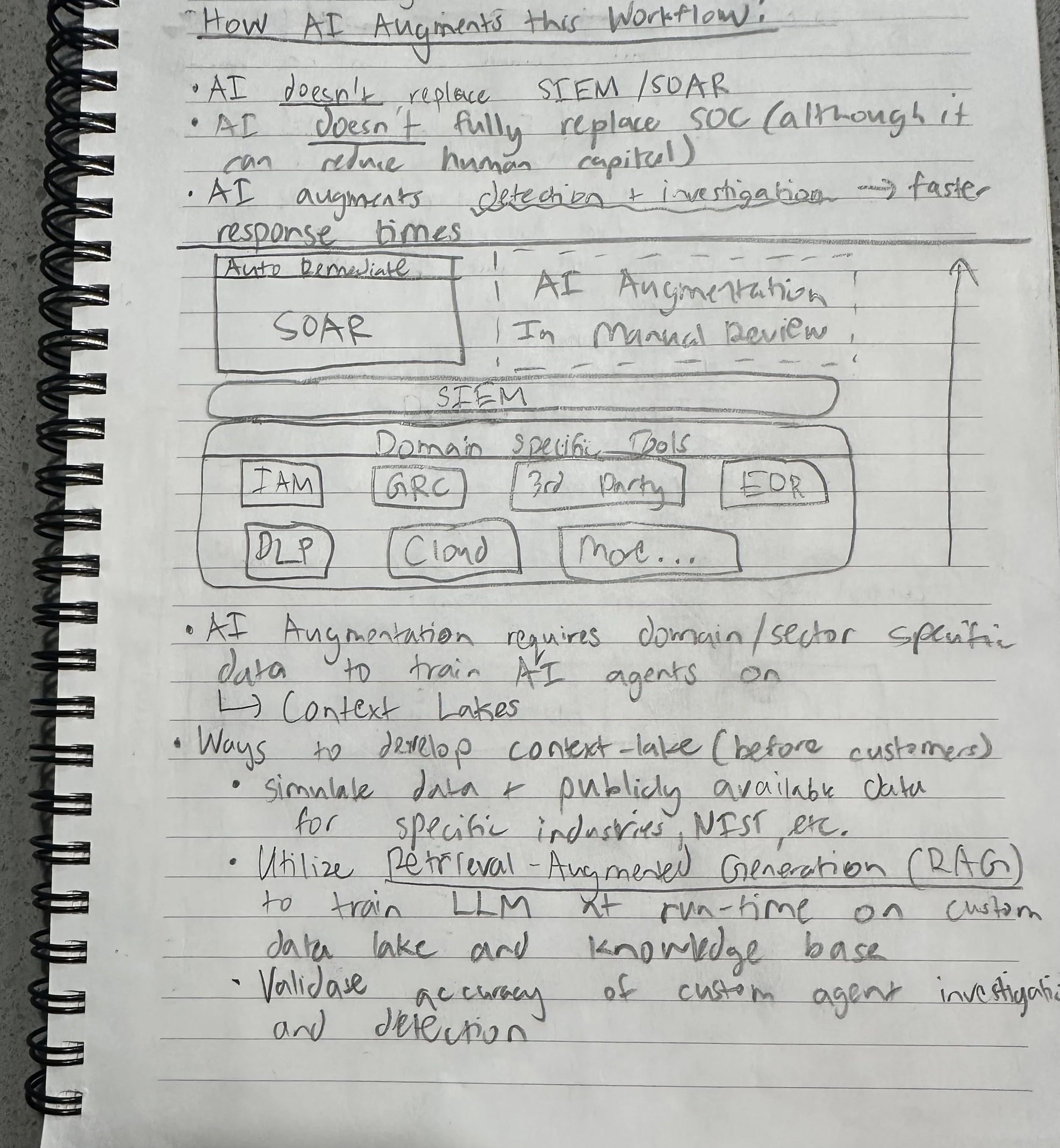

Context is key, and this held up when I validated it across major players in the SecOps space. A generic AI trained on broad market data is incapable of effectively triaging a customer’s SOC in a specific industry. Within security itself, major context differences occur across industries: the alerts and risk profiles a financial services customer faces are vastly different from those a retail enterprise experiences.

From my conversations, companies are using various architectural frameworks to ensure their agents can properly and accurately traverse their customer’s SOC. Zero-copy architecture mitigates additional third-party data risk while enabling AI-SOC agents to triage live queried data without adding to storage (and, to an extent, compute) costs. API integrations directly into a customer’s existing SIEMs, cloud, and on-prem environments give agents customer-specific context and let SOC analysts pull structured event history. Memory layers then allow agents to retain context across an active triage session rather than treating each alert in isolation. Essentially, AI-SOC platforms aim to give the customer full control over how much data they pull and when, and over how they want their agents to traverse, triage, and remediate it.

Custom context coupled with relevant memory of analyst workflows and triage patterns creates a powerful compounding effect: an agent that remembers how a similar lateral movement pattern was resolved three weeks ago is far more useful than one starting from scratch on every alert. This is the power of strong context and memory, which is why AI-native SOCs/SIEMs are consistently fine-tuning their models to get this right.
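To make the memory idea concrete, here is a minimal Python sketch of an agent recalling how a similar alert was resolved before. It is an illustration under my own assumptions, not how any vendor I spoke with implements it: the `ResolvedCase` and `TriageMemory` names, the signal tags, and the use of Jaccard similarity as the matching rule are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class ResolvedCase:
    """A past alert and how an analyst closed it (hypothetical record shape)."""
    case_id: str
    signals: frozenset[str]  # tags describing the alert, e.g. "lateral_movement"
    resolution: str


@dataclass
class TriageMemory:
    """In-memory store of resolved cases; a real system would persist these."""
    cases: list[ResolvedCase] = field(default_factory=list)

    def remember(self, case: ResolvedCase) -> None:
        self.cases.append(case)

    def recall(self, signals: set[str], min_similarity: float = 0.5):
        """Return (score, case) pairs for similar past cases, best match first."""
        matches = []
        for case in self.cases:
            union = signals | case.signals
            if not union:
                continue
            # Jaccard similarity: shared signals divided by all signals seen.
            score = len(signals & case.signals) / len(union)
            if score >= min_similarity:
                matches.append((score, case))
        return sorted(matches, key=lambda m: m[0], reverse=True)


memory = TriageMemory()
memory.remember(ResolvedCase(
    "case-101",
    frozenset({"lateral_movement", "smb", "new_admin_login"}),
    "Isolated host, rotated admin credentials",
))
memory.remember(ResolvedCase(
    "case-102",
    frozenset({"phishing", "macro_execution"}),
    "Quarantined email, reimaged laptop",
))

# A new alert shares two of four distinct signals with case-101 (score 0.5)
# and nothing with case-102, so only case-101 is recalled.
hits = memory.recall({"lateral_movement", "smb", "rdp"})
for score, case in hits:
    print(case.case_id, score, case.resolution)
```

The point of the sketch is the shape of the loop, not the similarity metric: the agent starts each alert with a shortlist of prior resolutions instead of a blank slate.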

(2) Compute & Storage

This insight stemmed from one of the most technically interesting conversations I had during the entire conference, when I learned about Databricks’ new Lakewatch platform. Digging into how traditional SIEMs operate surfaced two details worth calling out. (1) Traditional SIEMs are rule-based and list-based architecturally, making them extremely structured but also very expensive to traverse and query. (2) Traditional SIEMs don't separate storage and compute, meaning every query is expensive by default. When an enterprise queries a SIEM, it is essentially iterating through a list, which yields high storage costs, computational inefficiency, and a flood of false positives and negatives that can make an alert functionally useless.

Platforms like Lakewatch detach compute and storage entirely, and this is powerful when coupled with Databricks’ existing value proposition around database querying efficiency. In addition to cheaper storage and more focused “snapshots”, Lakewatch uses OCSF (Open Cybersecurity Schema Framework), an open, vendor-neutral schema that normalizes events from different sources into shared event classes, objects, and attributes, which makes relationships between data attributes easier to correlate (GitHub: About OCSF). According to the practitioners and developers I spoke with at Databricks, the benefits of an OCSF-based SIEM framework start with more efficient traversals across data sources. Those traversals power Lakewatch’s AI-driven investigations across its SIEM lakehouse and give its agents strong context, memory, and a horizontal view across the enterprise SIEM. Compared with traditional architecture, the benefits include cheaper storage, faster compute, and higher correlative accuracy for pinpointing investigations and identifying the alerts that truly matter.
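A short Python sketch of why a shared schema helps correlation. This is only loosely OCSF-style: the flat field names (`class_name`, `user`, `src_ip`, `status`) are simplified for illustration and are not the exact OCSF attribute layout, and the two raw log formats are hypothetical. It says nothing about how Lakewatch itself is built.

```python
from collections import defaultdict


def normalize_vpn(raw: dict) -> dict:
    """Map a hypothetical VPN log record onto the shared shape."""
    return {
        "class_name": "Authentication",
        "time": raw["ts"],
        "user": raw["username"].lower(),
        "src_ip": raw["client_ip"],
        "status": "Failure" if raw["result"] == "DENY" else "Success",
        "source": "vpn",
    }


def normalize_idp(raw: dict) -> dict:
    """Map a hypothetical identity-provider record onto the same shape."""
    return {
        "class_name": "Authentication",
        "time": raw["eventTime"],
        "user": raw["actor"]["email"].split("@")[0].lower(),
        "src_ip": raw["ipAddress"],
        "status": "Success" if raw["outcome"] == "SUCCESS" else "Failure",
        "source": "idp",
    }


def failed_logins_by_ip(events: list[dict]) -> dict[str, set[str]]:
    """Correlate across sources: which users saw failed logins from each IP?"""
    by_ip = defaultdict(set)
    for event in events:
        if event["class_name"] == "Authentication" and event["status"] == "Failure":
            by_ip[event["src_ip"]].add(event["user"])
    return dict(by_ip)


events = [
    normalize_vpn({"ts": 1700000000, "username": "JDoe",
                   "client_ip": "203.0.113.7", "result": "DENY"}),
    normalize_idp({"eventTime": 1700000030,
                   "actor": {"email": "asmith@example.com"},
                   "ipAddress": "203.0.113.7", "outcome": "FAILURE"}),
    normalize_idp({"eventTime": 1700000060,
                   "actor": {"email": "bkhan@example.com"},
                   "ipAddress": "198.51.100.4", "outcome": "SUCCESS"}),
]

# One IP failing logins for two different users across two tools.
print(failed_logins_by_ip(events))
```

Once both tools speak the same shape, the correlation is a single pass over one list instead of a custom join per vendor format.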

(3) Triage & Traversal

This builds right on the two claims I made above. When AI context is strong and compute/storage are efficient, accurate and timely triage becomes a natural byproduct. Frontier models have showcased their strengths in reasoning when provided proper guardrails and context, making them particularly well-suited for triage on top of well-structured data from zero-copy API integrations or OCSF-based SIEM object stores. AI is strong at recognizing patterns and identifying which signals cluster together into a genuine incident versus which are noise. The combination of strong context and efficient querying/zero-copy API calls is what has enabled, and will continue to enable, these autonomous SOC platforms to deliver value. When I began conducting research in the security operations space seven months ago, I assumed that triage was the biggest challenge, but I have quickly come to realize that triage can effectively be solved with strong efficiencies and structure in the backend architecture.
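As a rough illustration of "which signals cluster together into a genuine incident", the Python sketch below groups alerts that hit the same host within a short window and only promotes a group when several different rules fired. The `Alert` shape, the 15-minute window, and the two-rule threshold are assumptions chosen for the example; a production agent would reason over far richer context than this.

```python
from dataclasses import dataclass


@dataclass
class Alert:
    alert_id: str
    host: str
    timestamp: int  # seconds since epoch
    rule: str


def cluster_alerts(alerts: list[Alert], window_seconds: int = 900) -> list[list[Alert]]:
    """Group alerts on the same host that arrive within window_seconds of the previous one."""
    clusters: list[list[Alert]] = []
    open_cluster_for_host: dict[str, list[Alert]] = {}
    for alert in sorted(alerts, key=lambda a: a.timestamp):
        current = open_cluster_for_host.get(alert.host)
        if current and alert.timestamp - current[-1].timestamp <= window_seconds:
            current.append(alert)
        else:
            # Gap too large (or first alert for this host): start a new cluster.
            current = [alert]
            clusters.append(current)
            open_cluster_for_host[alert.host] = current
    return clusters


def likely_incidents(clusters: list[list[Alert]], min_distinct_rules: int = 2) -> list[list[Alert]]:
    """Keep only clusters where several different rules fired; the rest is treated as noise."""
    return [c for c in clusters if len({a.rule for a in c}) >= min_distinct_rules]


alerts = [
    Alert("a1", "web-01", 0, "brute_force"),
    Alert("a2", "web-01", 300, "new_admin_login"),
    Alert("a3", "web-01", 600, "smb_lateral"),
    Alert("a4", "db-02", 100, "port_scan"),
    Alert("a5", "web-01", 5000, "brute_force"),
]

incidents = likely_incidents(cluster_alerts(alerts))
# Five alerts collapse into one likely incident on web-01 (a1, a2, a3).
print([[a.alert_id for a in incident] for incident in incidents])
```

Note how much of the work is done by structure: with alerts already normalized and keyed by host and time, the clustering itself is a few lines.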

(4) Human Approval & Risk

Now this is one of those areas that is often overlooked, but super important to ensuring strong product-market fit for autonomous security tools. When conducting market research for LegionSDI, I quickly understood that human-in-the-loop style interfaces are a MUST, especially in highly regulated industries such as healthcare and financial services that are risk averse about AI implementation and execution. From interviewing security practitioners, business executives, and CISOs over the past few months, the message is clear: everyone understands that AI must be integrated into their security stack, but everyone is equally worried about hallucinations, black-box incidents, and, most importantly, executions without human approval. From the demos I briefly saw in the AI-SOC/SIEM space, companies understand this as well, and all of them offer some degree of flexibility and control over how much “automation” a customer wants. More risk-averse CISOs may want AI to simply triage but not remediate, while pro-AI executives may favor autonomous remediation. The key in developing a platform in the agentic SOC space is to give that decision-making power and flexibility to the customer, rather than assuming their preferences yourself. Flexibility here isn't just a UX and product design consideration, but a trust-building mechanism that will determine how aggressively enterprises are willing to hand over autonomy to these systems over time.
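One way to picture customer-controlled autonomy is as an explicit policy setting that every proposed remediation has to pass through. The Python sketch below is a hypothetical design of my own, not taken from any product demo: the three `Autonomy` levels, the two-level risk scale, and the `decide` function are all illustrative assumptions.

```python
from dataclasses import dataclass
from enum import Enum


class Autonomy(Enum):
    TRIAGE_ONLY = 1    # agent may investigate and recommend, never act
    APPROVE_EACH = 2   # agent may act only after a human approves
    AUTO_LOW_RISK = 3  # agent may act alone on low-risk actions only


@dataclass
class ProposedAction:
    name: str
    risk: str  # "low" or "high" (hypothetical two-level scale)


def decide(action: ProposedAction, autonomy: Autonomy, human_approved: bool = False) -> str:
    """Return 'execute', 'await_approval', or 'recommend_only'."""
    if autonomy is Autonomy.TRIAGE_ONLY:
        # Even an approval does not let the agent act at this level.
        return "recommend_only"
    if autonomy is Autonomy.AUTO_LOW_RISK and action.risk == "low":
        return "execute"
    # Everything else needs an explicit human sign-off.
    return "execute" if human_approved else "await_approval"


isolate = ProposedAction("isolate_host", risk="high")
close_dup = ProposedAction("close_duplicate_alert", risk="low")

print(decide(isolate, Autonomy.TRIAGE_ONLY))      # recommend_only
print(decide(isolate, Autonomy.APPROVE_EACH))     # await_approval
print(decide(close_dup, Autonomy.AUTO_LOW_RISK))  # execute
print(decide(isolate, Autonomy.AUTO_LOW_RISK))    # await_approval
```

A risk-averse CISO starts at `TRIAGE_ONLY` and moves up as trust builds; the setting belongs to the customer, and the product never assumes it.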

Key Takeaways

After spending an entire week on the expo floor and conversing with security professionals from across the world, there are a few things that have become clear to me:

Agentic SOC/SIEM isn’t only a feature of the existing security stack, but rather a way to rearchitect it. Companies that are doing this well aren’t only adding AI agents onto existing SIEMs, but are reconfiguring the way security stacks operate when it comes to compute, storage, context/memory, and eventually triage.

Human-in-the-loop is more than a feature; it’s a strong GTM strategy for any B2B SaaS tool (security or not) to develop long-lasting trust with executives. Most developers and builders view human-in-the-loop simply as an “accept” or “reject” button, when in reality it’s the difference between strong enterprise credibility and the exact type of black-box narrative George Kurtz described in his keynote.

AI inherently accelerates the speed at which both attackers and defenders operate. Context, memory, and backend architectural advantages will be the key differentiators that decide who wins.

And if you're a 21-year-old who just spent a week getting humbled by the sheer scale of the security industry -- well, parts two and three are already in the works 😀